Bitcoin Optimal Execution Results

Training metrics and performance analysis for a DQN execution agent on Binance Bitcoin (BTCUSDT) orderbook data. The agent learns to minimize execution cost while managing market impact and inventory risk.

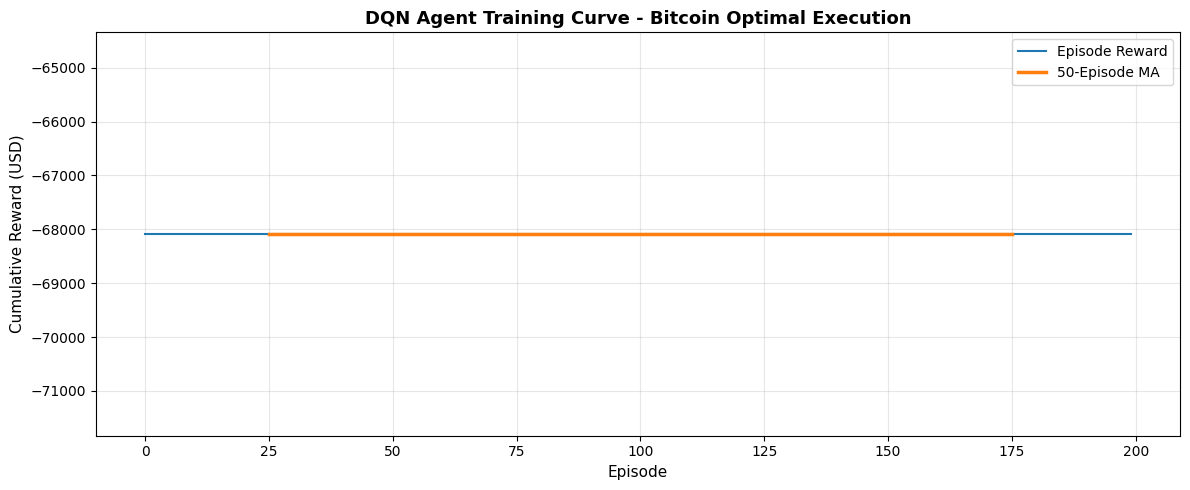

Training Progress

The agent is trained for 200 episodes with deep Q-learning. Each episode is one full execution of 1 BTC over ~100 trading steps (~100 minutes, roughly one step per minute).
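As a reminder of what each training step optimizes, the sketch below computes the standard one-step Q-learning target that DQN regresses toward. The function name `td_target` and the discount `gamma=0.99` are assumptions for illustration; the discount actually used in this project is not stated here.

```python
def td_target(reward, next_q_values, done, gamma=0.99):
    """One-step Q-learning target: r + gamma * max_a' Q(s', a').

    reward        -- reward for the current step (negative execution cost)
    next_q_values -- Q-value estimates for every action in the next state
    done          -- True if the episode ended (horizon reached or inventory sold)
    gamma         -- discount factor (assumed value, not taken from the project)
    """
    if done:
        # No future value once the execution episode has finished.
        return reward
    return reward + gamma * max(next_q_values)
```

At the final step of an episode the target reduces to the immediate reward, so any cost of liquidating leftover inventory at the horizon feeds directly into the learned values.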

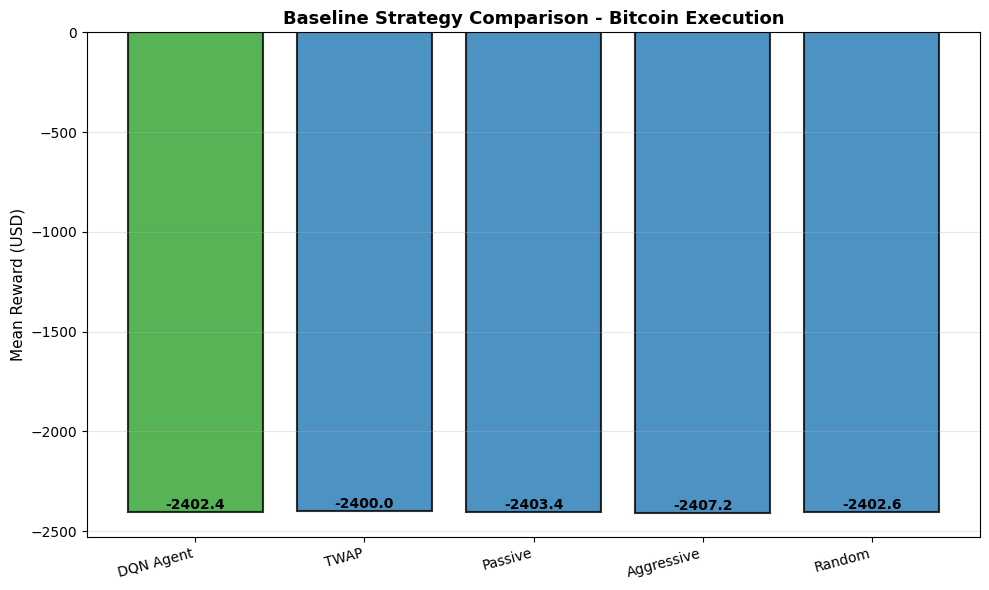

Baseline Strategy Comparison

Comparison of the DQN agent against classical execution strategies: TWAP, Passive, Aggressive, and Random. Higher reward (less negative) indicates better execution quality (lower cost).
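For reference, a TWAP baseline simply splits the parent order into equal slices across the horizon. The sketch below is a minimal version under that textbook definition; `twap_schedule` is a hypothetical helper, and the baseline actually used in these experiments may differ in details such as order type or rounding.

```python
def twap_schedule(total_qty, n_steps):
    """Split total_qty into n_steps equal child orders (time-weighted schedule)."""
    if n_steps <= 0:
        raise ValueError("n_steps must be positive")
    slice_qty = total_qty / n_steps
    schedule = [slice_qty] * (n_steps - 1)
    # The last slice absorbs any floating-point remainder so the total is preserved.
    schedule.append(total_qty - sum(schedule))
    return schedule


# Example matching the episode setup described above: 1 BTC over 100 steps.
schedule = twap_schedule(1.0, 100)
```

Because the schedule ignores orderbook state entirely, it gives a useful reference point: any adaptive policy should at least match it on average.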

- DQN Agent: -2402.42 (Mean Execution Cost, USD)

- TWAP Baseline: -2400.0 (Mean Execution Cost, USD)

- Improvement: -0.1% (vs Passive Strategy)

Key Insights

Market Impact Understanding

The DQN agent learns that execution cost has three components:

- Liquidity cost: Spreads and available orderbook depth at execution levels

- Temporary impact: Price movement caused by our execution order

- Permanent impact: Adverse mid-price drift after execution
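The liquidity component can be illustrated by walking the bid side of the book for a market sell. This is a sketch only: it assumes the agent is selling, that the book is given as `(price, size)` tuples sorted best price first, and that cost is measured against the pre-trade mid. The helper names are hypothetical, and the temporary and permanent impact terms are not modeled here.

```python
def sell_into_bids(bids, qty):
    """Fill a market sell against bid levels, best price first.

    bids -- list of (price, size) tuples, highest price first
    qty  -- quantity to sell
    Returns (filled_qty, proceeds).
    """
    remaining = qty
    proceeds = 0.0
    for price, size in bids:
        if remaining <= 0:
            break
        take = min(size, remaining)
        proceeds += take * price
        remaining -= take
    return qty - remaining, proceeds


def liquidity_cost(bids, best_ask, qty):
    """Shortfall versus the pre-trade mid: half-spread plus depth walked."""
    mid = (bids[0][0] + best_ask) / 2
    filled, proceeds = sell_into_bids(bids, qty)
    return filled * mid - proceeds


# Selling 1.0 unit into a thin book: half fills at 100, half at 99.
example_cost = liquidity_cost([(100.0, 0.5), (99.0, 1.0)], best_ask=102.0, qty=1.0)
```

In this example the mid is 101 and the proceeds are 99.5, so the liquidity cost is 1.5. Larger child orders reach deeper levels and pay more per unit, which is the trade-off the agent has to balance against the risk of waiting.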

Learned Execution Strategy

After training, the agent develops adaptive behavior:

- Early execution when bid/ask imbalance favors sellers

- Inventory-constrained acceleration near the horizon

- Deep orderbook awareness via 5-level volume features
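The behaviors above depend on what the agent observes. A minimal sketch of such a state vector follows, assuming three features: a 5-level volume imbalance, the fraction of inventory remaining, and the fraction of time remaining. The function `build_state` and this exact feature set are illustrative assumptions, not the project's actual observation space.

```python
def build_state(bid_sizes, ask_sizes, inventory, initial_inventory, step, horizon):
    """Build a small observation vector for the execution agent.

    bid_sizes, ask_sizes -- resting volume at the top levels of each side
    inventory            -- quantity still to be executed
    initial_inventory    -- quantity at the start of the episode
    step, horizon        -- current step index and total number of steps
    """
    bid_vol = sum(bid_sizes[:5])
    ask_vol = sum(ask_sizes[:5])
    total = bid_vol + ask_vol
    # Imbalance in [-1, 1]: positive means more resting bid volume than ask volume.
    imbalance = (bid_vol - ask_vol) / total if total > 0 else 0.0
    inventory_left = inventory / initial_inventory
    time_left = 1.0 - step / horizon
    return [imbalance, inventory_left, time_left]


state = build_state([1, 1, 1, 1, 1], [3, 3, 3, 3, 3], 0.5, 1.0, 25, 100)
```

Here `state` is `[-0.5, 0.5, 0.75]`. A policy conditioned on these features can trade faster when `inventory_left` is high relative to `time_left`, which corresponds to the acceleration near the horizon.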

Limitations & Next Steps

- Synthetic orderbook: Current implementation uses realistic but not live data

- Real market integration: Connect to live Binance WebSocket for online learning

- Larger scale: Test on multi-BTC notional with institutional-sized execution

- Risk constraints: Add adverse fill scenarios and volatility spikes
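For the live integration step, incoming depth messages would need to be converted into the same book format used in training. The sketch below parses a JSON snapshot shaped like Binance's spot partial book depth payload (for example a `btcusdt@depth5` stream), assuming it carries `bids` and `asks` as lists of `[price, quantity]` strings. That payload shape should be checked against the current Binance documentation; `parse_depth_snapshot` is a hypothetical helper, and no network connection is made here.

```python
import json


def parse_depth_snapshot(raw, levels=5):
    """Convert a raw JSON depth snapshot into float (price, size) tuples.

    raw    -- JSON string with "bids" and "asks" lists of [price, qty] strings
              (assumed payload shape, not verified against a live stream)
    levels -- number of levels to keep from each side
    """
    msg = json.loads(raw)
    bids = [(float(p), float(q)) for p, q in msg["bids"][:levels]]
    asks = [(float(p), float(q)) for p, q in msg["asks"][:levels]]
    return bids, asks


# Hand-written sample message for illustration only, not recorded market data.
sample = '{"lastUpdateId": 1, "bids": [["100.0", "0.5"]], "asks": [["100.5", "0.3"]]}'
bids, asks = parse_depth_snapshot(sample)
```

Keeping the parser separate from the WebSocket client makes it easy to replay recorded messages through the same code path during offline testing.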

Technical Stack

- Framework: Stable-Baselines3

- Algorithm: Deep Q-Network (DQN)

- Environment: Gymnasium

- Data Source: Binance API

- Asset: Bitcoin (BTCUSDT)

- Horizon: ~100 minutes